Four Randomization Traps All Researchers Must Avoid

Oct. 1, 2019

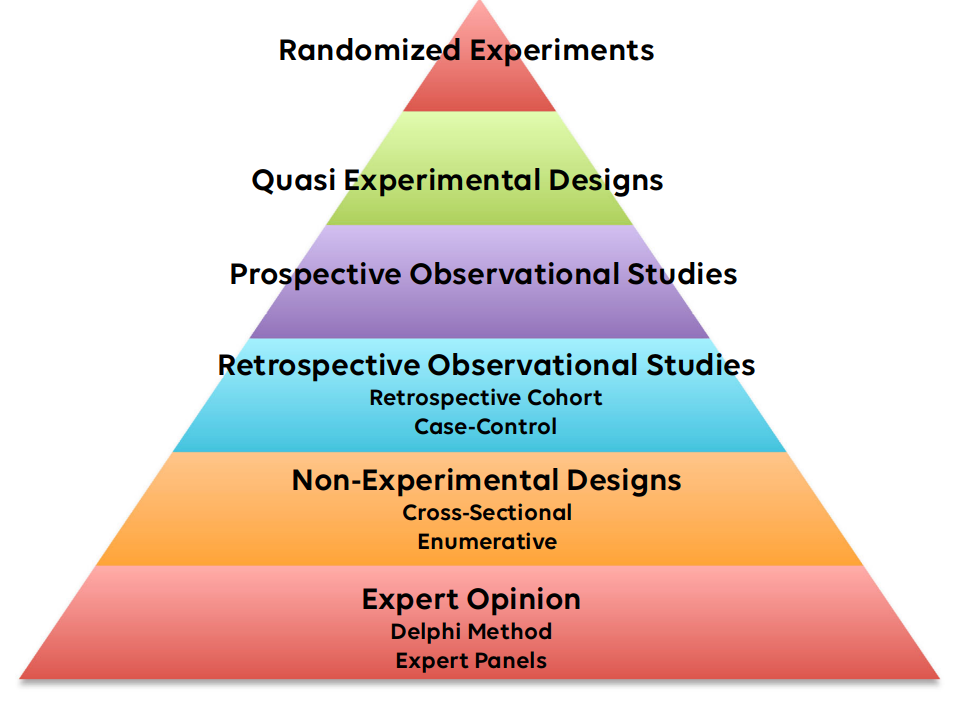

As a statistician, my primary goal in every study is to help the content expert, the researcher, get the best results from their work. Often this means helping the researcher climb higher on the evidence-based medicine pyramid, aiming for the randomized experiment near the top. Unfortunately, randomization is not always as easy as it seems. I have seen many good studies fall short of their potential impact simply because of bad randomization. When an experiment has been completed using a bad randomization technique, there is often no statistical way to fix it. Peer reviewers are quick to point out bad randomization, and the result is often rejection from the desired journal and a study that fails to make the impact it deserves.

Failures to randomize properly usually take the form of one of four traps that all researchers must avoid: friendomization, convenience sampling, quasi-randomization, and failure to plan.

Friendomization

I have been involved in many studies in disaster medicine. Because the disaster medicine community is much smaller than many other areas of medicine, it often relies on personal ties to increase the quality of research studies. Multi-center studies are common, typically involving several sites within a country or region to characterize the situation there. Unfortunately, the sites for these multi-center studies are often recruited through personal connections, a process I call friendomization.

Sadly, friendomization is not randomization. Your friends, colleagues, and acquaintances are not a random sample. In fact, they are likely to share your interests and your biases. These studies often go well at the outset, as the high motivation among your colleagues fuels rapid data collection. The problem comes when you try to make inferences about the population. You cannot infer anything about a country or population from a biased sample.

What’s the solution? Avoid friendomization. If you are doing a multi-center study, make a list of all the centers in the area and choose some at random. Drawing the sample at random is what allows you to make inferences about the entire population. Not sure how to do this? Talk to your statistician for help devising the right sampling plan.
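As a rough illustration of "make a list and choose some at random", here is a minimal sketch of a simple random sample of centers drawn without replacement. The function name `sample_centers`, the 20 hypothetical center names, the sample size of 5, and the seed value are all assumptions for illustration; the real sampling frame and sample size should come from your sampling plan.

```python
import random

def sample_centers(centers, k, seed):
    """Draw a simple random sample of k centers without replacement (hypothetical helper)."""
    if k > len(centers):
        raise ValueError("k cannot exceed the number of centers")
    rng = random.Random(seed)  # seeded so the draw can be reproduced and reported
    return rng.sample(centers, k)  # every subset of size k is equally likely

# Hypothetical sampling frame: every eligible center in the region, not just the ones you know
all_centers = [f"Center {i:02d}" for i in range(1, 21)]
chosen = sample_centers(all_centers, k=5, seed=2019)
print(chosen)
```

The key design choice is that the list comes first and contains every eligible center; the random draw then decides which ones take part, rather than personal connections.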

Convenience Sampling

Small studies often use convenience samples. I have been involved in many studies of training programs for students, residents, or other learners. In one study we were assessing the influence of an educational intervention on a group of medical residents. Because the researchers had limited resources and could apply the intervention only to a small sample, they chose to study a single group of residents in a single class at a single university. The study went very well until it reached peer review. Smart reviewers correctly pointed out that the results pertained only to the single sample taken. The reviewers objected to any use of p-values or confidence intervals to describe the population. They were right.

Convenience samples are very convenient. The problem comes with the analysis. Statistical theory demands that any inference about a population be based on a random sample, not a convenience sample. No p-values. No confidence intervals. No statistics to infer anything about the population at large. Statisticians who are asked to analyze the data can (and should) tell you that you cannot draw any conclusions about the population. If you coerce the statistician, or do the statistics yourself, you may make it as far as peer review, where one of the reviewers will almost certainly recognize the fault.

Quasi-randomization: Random is not Randomized

Small randomized experiments are my preferred methodology for exploring new concepts. In one study we performed, a researcher compared two different triage methods on a group of standardized patients. He divided the participants into two groups by alternating between group A and group B in the order the participants were recruited. Sadly, at the publication stage, a very astute peer reviewer spotted instantly that the randomization procedure was inadequate. The reviewers demanded that the study be classified not as a randomized experiment but as a prospective cohort study, moving it down the level-of-evidence pyramid. The word "randomized" was removed from the title, and the impact of the study was severely limited.

Random is not the same as randomized. Using the patient's last name, ID number, order of recruitment, hair color, or blood type for group assignment is not randomization. Some researchers call this quasi-randomization, a term we should all avoid and banish from our vocabulary. Randomization demands that the researchers do something active to randomize. Assessing causation requires a randomized study. Without true randomization, the researcher is severely limited in what conclusions can be drawn from the study.

How can this be avoided? When assigning groups for a randomized study, pick a simple, active method of assignment. This can be as simple as flipping a coin or drawing a sealed envelope at random from a shuffled stack. Numerous online tools can randomize a group of participants. Talk to a statistician if you need help.
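For readers who prefer software to envelopes, here is a minimal sketch of an active random assignment into two groups. It assumes the full list of participants is known before anyone is assigned; the function name `randomize_two_groups`, the participant IDs, and the seed are hypothetical. Shuffling the whole list plays the role of shuffling the stack of sealed envelopes, and splitting it in half keeps the groups the same size (or within one of each other). Flipping a coin separately for each participant is also randomization, but it can leave the groups unequal in size.

```python
import random

def randomize_two_groups(participants, seed):
    """Randomly split participants into groups A and B whose sizes differ by at most one."""
    rng = random.Random(seed)  # record the seed so the allocation can be audited later
    shuffled = list(participants)  # copy so the caller's list is left untouched
    rng.shuffle(shuffled)  # software equivalent of shuffling a stack of sealed envelopes
    half = (len(shuffled) + 1) // 2
    return {"A": shuffled[:half], "B": shuffled[half:]}

# Hypothetical participant IDs; names, ID numbers, and recruitment order play no role
participants = [f"P{i:03d}" for i in range(1, 13)]
groups = randomize_two_groups(participants, seed=42)
print("Group A:", groups["A"])
print("Group B:", groups["B"])
```

Unlike alternating A and B or sorting by last name, nothing about a participant determines their group; only the random shuffle does.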

Failing to Plan is Planning to Repeat Your Study

One study we performed involved a randomized experiment comparing two different simulation scenarios. The study design was written in detail for submission to the ethics committee. Unfortunately, we failed to specify in the design exactly how the randomization would occur. A very eager researcher went ahead and divided the patients into two groups alphabetically by last name. In this case the fault clearly rested with the statistician (me) for failing to explain to the rest of the team, at the outset, exactly how randomization would take place.

Sadly, there is no way to fix bad randomization once it is done. No statistics, math, or machine learning algorithm can fix it. At that point the researchers have two choices. One is to repeat the study with proper randomization. The other is to report every flaw in the study's limitations section, which severely limits the study's potential impact. Simply defining the randomization procedure at the outset avoids this trap.
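One common way to define the randomization procedure at the outset (a technique chosen here for illustration, not one this post prescribes) is to generate the entire allocation schedule before the first participant is recruited, using permuted blocks, and to write the method, block size, and seed into the protocol. Each participant then receives the next entry in the list, in recruitment order. The function name `permuted_block_schedule`, the block size of 4, and the seed are hypothetical. One caveat: with a fixed, small block size, anyone who knows the scheme can sometimes predict the last assignment in a block, so the schedule is usually kept concealed from the people enrolling participants.

```python
import random

def permuted_block_schedule(n_participants, block_size, seed, arms=("A", "B")):
    """Pre-generate an allocation list in permuted blocks (hypothetical helper)."""
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms")
    rng = random.Random(seed)  # seed recorded in the protocol so the list can be regenerated
    schedule = []
    while len(schedule) < n_participants:
        # Each block holds an equal number of every arm...
        block = list(arms) * (block_size // len(arms))
        # ...in a random order, so group sizes never drift far apart.
        rng.shuffle(block)
        schedule.extend(block)
    return schedule[:n_participants]

# Generated once, before recruitment; the i-th recruited participant gets schedule[i - 1].
schedule = permuted_block_schedule(n_participants=20, block_size=4, seed=20191001)
for position, arm in enumerate(schedule, start=1):
    print(position, arm)
```

Because the list exists before anyone is enrolled, there is no room for an eager team member to improvise an alphabetical or alternating assignment.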

Avoiding the Four Traps

As a statistician, few things are more disappointing to me than seeing hard-working and dedicated researchers fail to make a true impact because of a simple error in study design, especially when I cannot fix it. In many cases a simple conversation between the researchers and the statistician before any data is collected is all that is needed.

To make sure your study has the greatest impact, avoid the four traps of friendomization, convenience sampling, quasi-randomization, and failing to plan.